New_src_count: how many of those sources have only been in a single day in the past 30.Src_count: how many total sources a user has been seen from in an hour.| stats dc(src) as src_count dc(eval(if(day_count=1, src, null()))) as new_src_count by user _time Count how many days a user src combination has been seen.| eventstats dc(day) as day_count by user src Normalize user, lowercasing and pulling just user from where lower(src)!=lower(user_domain).| eval user=lower(mvindex(split(user, 0)) For src values that are IPs replace src with src_nt_host from asset data if it exists.| eval src=lower(if(match(src, “(”) AND isnotnull(src_nt_host), mvindex(src_nt_host, 0), src)) Pull in Splunk assets to get src hostname (src_nt_host).Remove datamodel from field names and rename dest_nt_domain to be more accurate.| rename Authentication.* as * dest_nt_domain as user_domain Grouping by user src and dest_nt_domain which contains the user’s domain.Removing events with unknown an irrelevant data.Focusing on Windows authentication 4624 events.Tstats to quickly look at 30 days of data.| tstats `summariesonly` count from datamodel=Authentication where Authentication.signature_id=4624 NOT er=”-” NOT er=”ANONYMOUS LOGON” NOT er=”unknown” NOT Authentication.src=”unknown” by er Authentication.src st_nt_domain _time span=1h

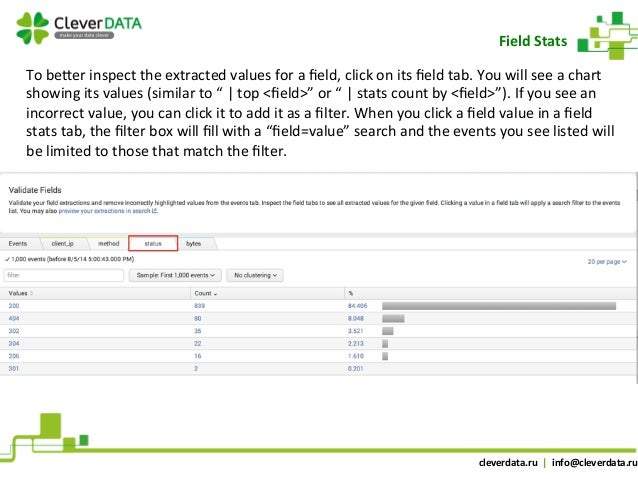

Using streamstats we can put a number to how much higher a source count is to previous counts: If the source count was significantly higher than any previous source counts I would consider it anomalous. Using streamstats to get neighboring valuesĪs an alternative to MLTK, I use streamstats to mimic how I–as an analyst–investigate an alert.įor our example of a user being seen logging in from an anomalous number of sources, I would start by looking at historical source counts over the past 30 days. If identity data in Splunk for different types of users is high quality, reflects different usage patterns, and there are less than 1024 of them then MLTK may be the direction to go. Unfortunately, outside of editing config files and making sure you have enough processing power, the DensityFunction is limited to 1024 groupings and 100,000 events before it starts sampling data. This algorithm is meant to detect outliers in this kind of data. One of the included algorithms for anomaly detection is called DensityFunction. Splunk’s Machine Learning Toolkit (MLTK) adds machine learning capabilities to Splunk. It’s getting even worse because more events aren’t getting buried by high counts during certain hours of day. Requiring more than 15 data points, there are 14,298 results. The distribution of source count is an exponential distribution: One example would be if we were looking for users logging in from an anomalous number of sources in an hour. This means more data equals more outliers equals more alerts. Standard deviation can be used to find outliers but a certain percentage of data will always be seen as outlier. In security contexts, user behavior is most often an exponential distribution, low values being commonly seen with high values being more rare. Using standard deviation to find outliers is generally recommended for data that is normally distributed.

Standard deviation measures the amount of spread in a dataset using the value’s distance from the mean. I will also walk you through the use of streamstats to detect anomalies by calculating how far a numerical value is from its neighbors. In this tutorial we will consider different methods for anomaly detection, including standard deviation and MLTK. Standard deviation, however, isn’t always the best solution despite being commonly used. Detecting anomalies is a popular use case for Splunk.
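To make the neighbor comparison described above concrete, here is a minimal Python sketch of the idea outside Splunk. It is not the query from this post: the names `HourlyCount`, `Scored`, and `score_against_history`, the `min_history` value, and the `ratio_threshold` value are all hypothetical choices made for illustration. It assumes the rows belong to a single user and are already sorted by time, and it compares each hourly source count with the highest count seen in earlier hours, which is roughly what a running maximum over previous events would give you.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional


@dataclass
class HourlyCount:
    hour: str       # label for the hour bucket (hypothetical format)
    src_count: int  # distinct sources seen for one user in that hour


@dataclass
class Scored:
    hour: str
    src_count: int
    prev_max: Optional[int]  # highest src_count in earlier hours, None if no history
    ratio: Optional[float]   # src_count divided by prev_max, None if no history
    anomalous: bool


def score_against_history(
    rows: Iterable[HourlyCount],
    min_history: int = 3,          # assumption: earlier hours needed before flagging
    ratio_threshold: float = 2.0,  # assumption: "significantly higher" means 2x
) -> List[Scored]:
    """Compare each hour's source count with the maximum of all earlier hours.

    Rows must be for one user and sorted oldest first.
    """
    scored: List[Scored] = []
    prev_max: Optional[int] = None
    for seen, row in enumerate(rows):  # seen = number of earlier hours
        # No ratio without history (or if the earlier maximum is zero).
        ratio = row.src_count / prev_max if prev_max else None
        anomalous = (
            ratio is not None
            and seen >= min_history
            and ratio >= ratio_threshold
        )
        scored.append(Scored(row.hour, row.src_count, prev_max, ratio, anomalous))
        # Update the running maximum only after scoring the current hour,
        # so a value is never compared with itself.
        if prev_max is None or row.src_count > prev_max:
            prev_max = row.src_count
    return scored


if __name__ == "__main__":
    counts = [1, 2, 1, 2, 9, 3]  # made-up hourly source counts for one user
    rows = [HourlyCount(f"hour-{i}", c) for i, c in enumerate(counts)]
    for item in score_against_history(rows):
        print(item)
```

Updating the running maximum only after the current hour has been scored keeps the comparison strictly against earlier values, which matches how an analyst would read the history. Because the threshold is a ratio rather than a share of the data, the number of flagged hours does not automatically grow with the volume of events.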
